Most organisations certified to ISO/IEC 42001:2023 performed their AI impact assessment once. It sits in the AI management system (AIMS) document register, dated to initial deployment: version-controlled, approved, and untouched. Meanwhile, the AI system it describes has been retrained twice, has ingested new data sources, and has expanded to a user population the original assessment never contemplated. The assessment describes a system that no longer exists.

Clauses 8.2 and 8.4 of ISO/IEC 42001:2023 require AI risk assessments and AI system impact assessments, respectively, to be performed at planned intervals or when significant changes are proposed or occur. The phrase “significant changes” carries the full weight of the reassessment obligation, and the standard does not define it.

That undefined threshold is where most AIMS implementations quietly fail.

What ISO 42001 Clauses 6.1.2 and 6.1.4 Actually Require

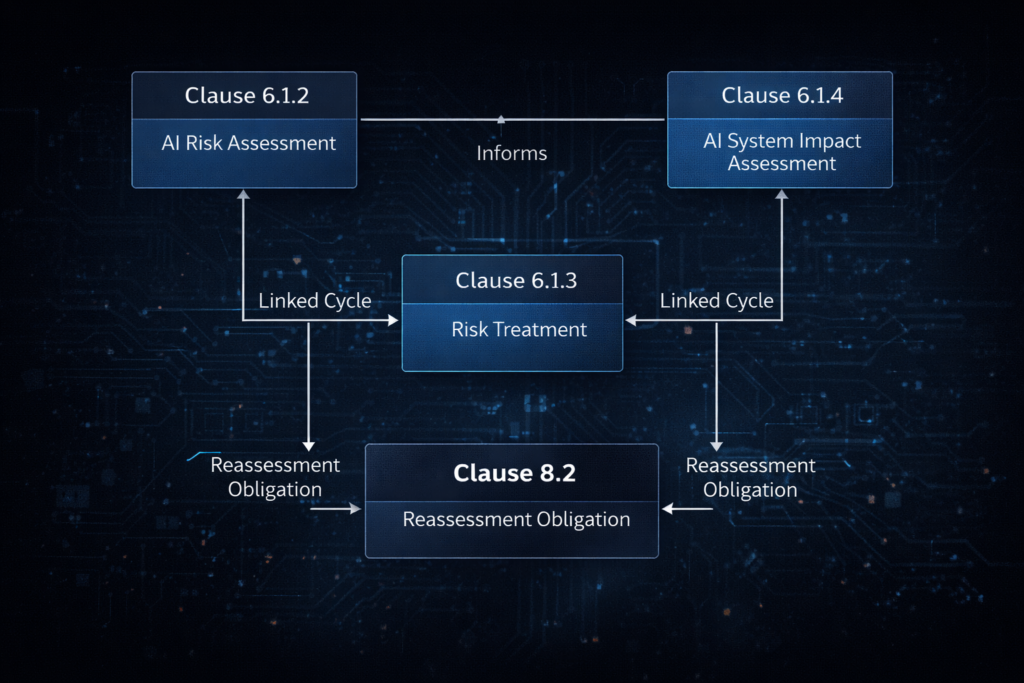

ISO/IEC 42001:2023 splits AI risk governance across two parallel assessment obligations that must operate as a linked cycle.

Clause 6.1.2 requires a formal AI risk assessment process — identification, analysis, evaluation, and prioritisation of AI-related risks. The process must produce consistent, comparable results across assessment cycles. Clause 8.2 operationalises this by mandating reassessment at planned intervals and when significant changes occur. Documented results must be retained for each cycle, not just the first.

Clause 6.1.4 requires a separate AI system impact assessment — an evaluation of consequences for individuals, groups, and societies. This covers ground that technical risk assessment misses: bias effects on specific populations, accessibility failures, consequences that shift when the system deploys into a new jurisdiction. Annex A control A.5.2 specifies the trigger conditions more concretely than the core clauses: major system updates, data source shifts, business function expansion, regulatory changes, and incidents all mandate reassessment.

The two assessments feed each other. Impact assessment outputs inform risk treatment decisions under Clause 6.1.3. Risk assessment findings shape the scope of the next impact assessment cycle. Neither functions as a standalone document — they are linked processes that must move together when the AI system changes.

Note: the Clause 6.1.3 reference above reflects the mapped requirement of the standard. Verify it against the current edition before audit use.

Where AI Impact Assessment Implementations Fail

The dominant failure pattern is classification inaction. Organisations understand that significant changes require reassessment. They accept the principle during implementation. Then they never formally define what constitutes a significant change in their AIMS documentation.

Without that definition, every model retrain passes without a documented classification decision. Every data refresh, every scope extension, every prompt-engineering change that alters decision logic — none of these events trigger a formal review because the mechanism to classify them does not exist. The AI impact assessment remains permanently dated to initial deployment.

Auditors encountering this pattern find a single assessment record with no subsequent versions and no documented rationale explaining why reassessment was not required. GloCert International flags this as a major nonconformity candidate: impact assessments not conducted for all in-scope AI systems, or assessments that do not address impacts on affected individuals after system changes. No version history, no defence.

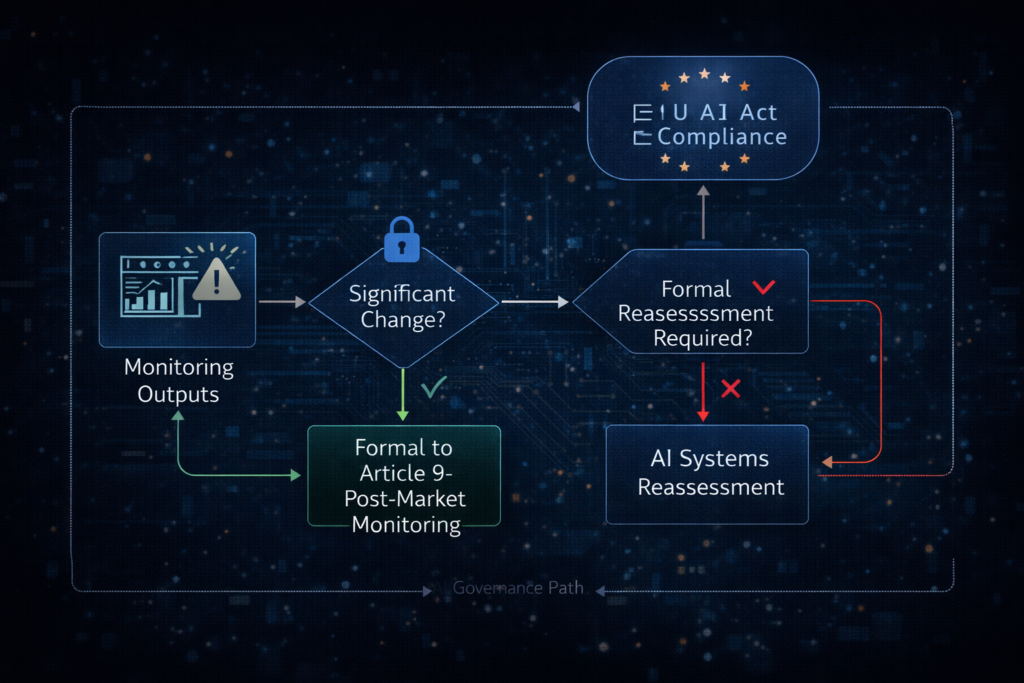

A secondary failure compounds the first. Clause 9.1 monitoring often detects model drift or performance degradation — the data exists in dashboards and logs. But no documented escalation path connects monitoring outputs to the Clause 6.1.2/6.1.4 reassessment cycle. The two processes run in separate silos. Drift data that should trigger a “significant change” classification review sits in an operational dashboard and goes nowhere near the risk governance function.
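The missing escalation path can be sketched in a few lines. This is a minimal illustration, not a prescribed mechanism: the metric names (`psi`, `accuracy_drop`), the limits, and the system identifier are all hypothetical, and neither the standard nor any certification body defines them. The point is only that a threshold breach produces a recorded request for a classification review instead of staying in a dashboard.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class DriftThreshold:
    """Hypothetical threshold entry; metric names and limits are illustrative."""
    metric: str
    limit: float


@dataclass(frozen=True)
class ReviewRequest:
    """A request for a formal 'significant change' classification review."""
    system_id: str
    metric: str
    observed: float
    limit: float
    raised_on: date


def escalate(system_id, readings, thresholds, today):
    """Return one ReviewRequest per metric whose reading exceeds its limit.

    Metrics with no reading are skipped, not treated as breaches.
    """
    requests = []
    for t in thresholds:
        observed = readings.get(t.metric)
        if observed is not None and observed > t.limit:
            requests.append(
                ReviewRequest(system_id, t.metric, observed, t.limit, today)
            )
    return requests


# Illustrative values only; real thresholds belong in the AIMS documentation.
THRESHOLDS = [DriftThreshold("psi", 0.2), DriftThreshold("accuracy_drop", 0.05)]
requests = escalate(
    "credit-scoring-v3",
    {"psi": 0.31, "accuracy_drop": 0.02},
    THRESHOLDS,
    date(2026, 1, 15),
)
for r in requests:
    print(f"{r.system_id}: {r.metric}={r.observed} exceeds {r.limit}, review requested")
```

Each `ReviewRequest` would then be handed to whoever owns the classification decision, so the outcome (reassess, or documented rationale for not reassessing) is recorded against the breach that caused it.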

Why August 2026 Makes This Urgent

Organisations using ISO 42001 certification as their EU AI Act governance framework face a specific problem. EU AI Act Article 9 requires a risk management system for high-risk AI systems that operates as a continuous iterative process planned and run throughout the entire lifecycle, with regular systematic review and updating. Article 9 explicitly links risk reassessment to post-market monitoring data collected under Article 72 — creating a near-continuous trigger, not an interval-based one.

ISO/IEC 42001:2023 Clause 8.2 permits a static schedule if no significant changes are defined. Article 9 does not. The two frameworks are compatible only if the organisation actively bridges the gap — calibrating its AIMS reassessment intervals to post-market monitoring cycles so that Article 9’s continuous-iterative obligation is satisfied through the AIMS rather than through a parallel process.
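One possible reading of "calibrating" is sketched below, under stated assumptions: the reassessment due date is taken as the earlier of the AIMS planned-interval date and the next post-market monitoring review. The function name, the 365-day interval, and the dates are hypothetical; neither ISO/IEC 42001 nor the EU AI Act prescribes a specific interval.

```python
from datetime import date, timedelta


def next_reassessment_due(last_assessment, planned_interval_days, next_pmm_review):
    """Return the earlier of the planned-interval date and the next
    post-market monitoring (PMM) review date."""
    planned = last_assessment + timedelta(days=planned_interval_days)
    return min(planned, next_pmm_review)


# Illustrative dates: an annual AIMS interval, with a PMM review due sooner.
due = next_reassessment_due(date(2026, 1, 10), 365, date(2026, 4, 1))
print(due)
```

Taking the minimum means the planned interval acts only as a backstop, so the monitoring cycle drives the schedule whenever it is the tighter of the two.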

The enforcement date for high-risk AI system obligations under Articles 9–17 is 2 August 2026. Under Article 99, fines reach €35 million or 7% of global annual turnover for prohibited practices, and €15 million or 3% for non-compliance with most other operator obligations, including those covering high-risk systems. An ISO 42001 certificate that demonstrates a static, single-point assessment cycle will not satisfy Article 9 for Annex III high-risk systems.

No certification body (CB) or accreditation body has published specific guidance defining trigger conditions or minimum frequency for AI impact assessment refresh when underlying models change. Auditors default to their own interpretation of Clause 8.2. Organisations that define the threshold themselves and produce evidence of consistent application are better positioned than those waiting for CB guidance that does not yet exist.

Three Steps to Close the Reassessment Gap Before Surveillance

1. Define “significant change” in your AIMS documentation. Create an AI Model Change Classification Policy that specifies threshold categories: retraining on new or updated data, fine-tuning or prompt changes altering decision logic, deployment scope expansion, new data source integration, monitoring outputs exceeding defined drift thresholds, and regulatory or legal changes affecting operating context. Assign a classification owner accountable for each determination. Annex A.5.2 already lists the trigger categories. Build your policy around them.

2. Retrofit a change register for existing AI systems. For each in-scope system, document the date of the original impact assessment, every model or deployment change since that date, and a recorded determination for each change event: either that it triggered reassessment or, with classification owner sign-off, why reassessment was not required. Where changes occurred without documented rationale, conduct a retrospective assessment before the surveillance audit and log it as a corrective action under Clause 10.

3. Connect Clause 9.1 monitoring to the reassessment cycle. Modify your monitoring framework to include a documented escalation path: when metrics exceed defined drift or performance thresholds, a formal “significant change” classification review is initiated. The output either triggers a Clause 6.1.2/6.1.4 reassessment or produces a documented “no reassessment required” determination with rationale. For EU AI Act high-risk systems, align this cycle with Article 72 post-market monitoring intervals.
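Steps 1 and 2 above can be sketched as a small data model. This is a minimal illustration under stated assumptions, not a template from the standard: the `ChangeCategory` names mirror the categories listed in step 1, while the `ChangeEvent` fields, the `register_gaps` helper, and the sample entries are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum
from typing import Optional


class ChangeCategory(Enum):
    """Threshold categories from step 1; the enum names are illustrative."""
    RETRAINING = "retraining on new or updated data"
    LOGIC_CHANGE = "fine-tuning or prompt change altering decision logic"
    SCOPE_EXPANSION = "deployment scope expansion"
    NEW_DATA_SOURCE = "new data source integration"
    DRIFT_BREACH = "monitoring output exceeding drift threshold"
    REGULATORY = "regulatory or legal change"


@dataclass
class ChangeEvent:
    system_id: str
    occurred_on: date
    category: ChangeCategory
    # None means nobody has classified the change yet.
    reassessment_required: Optional[bool] = None
    rationale: str = ""
    signed_off_by: str = ""

    def is_documented(self) -> bool:
        """A determination counts only with a decision, a rationale, and a sign-off."""
        return (
            self.reassessment_required is not None
            and bool(self.rationale.strip())
            and bool(self.signed_off_by.strip())
        )


def register_gaps(events, original_assessment):
    """Changes after the original assessment date that lack a documented determination."""
    return [
        e for e in events
        if e.occurred_on > original_assessment and not e.is_documented()
    ]


# Hypothetical register entries for one in-scope system.
register = [
    ChangeEvent(
        "chatbot-a", date(2024, 9, 1), ChangeCategory.RETRAINING,
        reassessment_required=False,
        rationale="Same data distribution; metrics within thresholds",
        signed_off_by="classification owner",
    ),
    ChangeEvent("chatbot-a", date(2025, 2, 10), ChangeCategory.SCOPE_EXPANSION),
]
gaps = register_gaps(register, original_assessment=date(2024, 3, 1))
for g in gaps:
    print(f"{g.system_id}: {g.category.value} on {g.occurred_on} needs retrospective review")
```

Treating an entry without rationale or sign-off as undocumented is a deliberate choice here: it makes the list of gaps match what an auditor would look for, namely changes with no recorded decision behind them.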

The Single Thing to Remember

An AI impact assessment is not a deployment gate. It is a lifecycle document. If your AIMS cannot answer the question “what changed since the last assessment, and who decided it didn’t require reassessment?” — you have a structural nonconformity that will surface at surveillance. Define the threshold. Build the register. Connect the monitoring. The standard requires it. The EU AI Act demands it. And your auditor will ask for it.

About AEC International

AEC International provides ISO certification, training, and consultancy services at the intersection of AI governance, information security, and management system implementation. We support organisations across industries in achieving and maintaining ISO certification — from gap analysis and implementation through audit preparation and continual improvement.

Learn more: www.aec.llc