Your organisation certified to ISO 50001:2018 three years ago. Since then, you replaced two compressors, added a production line, and shifted to a three-day office occupancy model. Your energy performance indicators still reference the original baseline. The trend line looks good — energy intensity is down 12% — but the operational conditions that produced that number bear almost no resemblance to the conditions your baseline was built on. That 12% is an artefact, not evidence. You have an ISO 50001 energy baseline drift problem, and it will surface under scrutiny.

When your CSRD limited assurance provider asks how the energy intensity ratio in your ESRS E1-5 disclosure was calculated, the methodology trail will dead-end at a baseline that no longer describes your operations. This is not an energy management housekeeping problem. It is an assurance risk.

What ISO 50001:2018 Clauses 6.3, 6.4, and 6.5 Actually Require

Three clauses form the evidential chain for energy performance claims under ISO 50001:2018. They operate as a dependency sequence — get the first one wrong and the other two inherit the error.

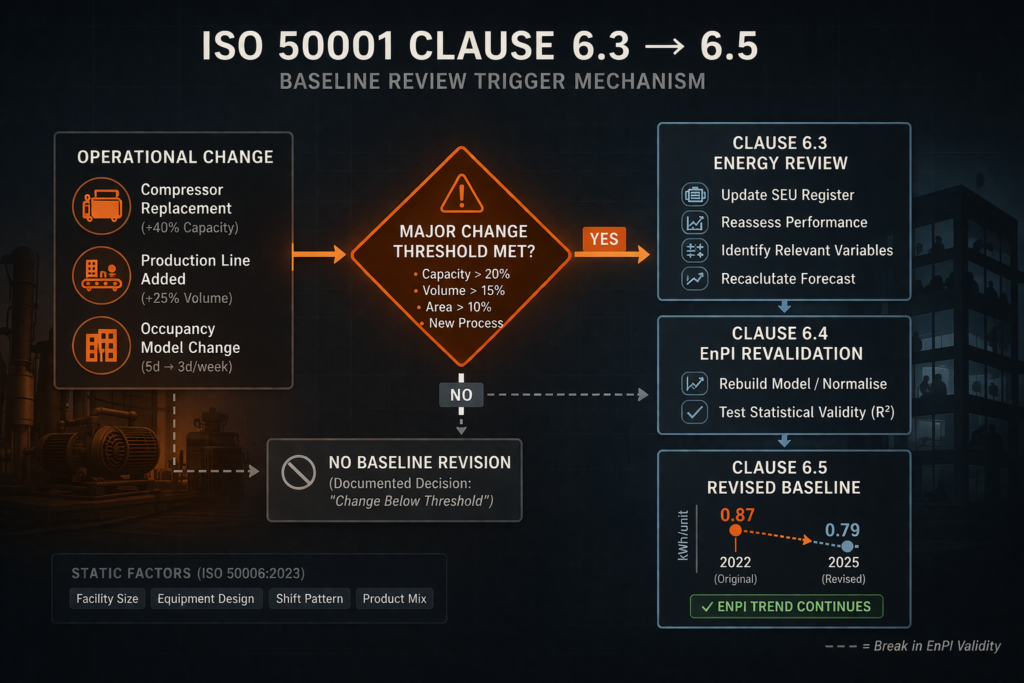

Start with Clause 6.3, the energy review. The organisation must conduct the review at defined intervals and whenever major changes occur: identify significant energy uses (SEUs), map the variables affecting their performance, assess current energy performance of each SEU, and estimate future consumption. ISO 50006:2023 Section 5.1 confirms that the energy review is the foundational input to all EnPI and energy baseline (EnB) determination. Skip the re-trigger after an operational change and every downstream metric is built on stale foundations.

Clause 6.4 covers EnPIs. The organisation must determine indicators appropriate for measuring and monitoring energy performance and for demonstrating continual improvement. Where relevant variables affect performance materially, EnPIs must account for them. The standard permits statistical, aggregated, or engineering-model approaches — ISO 50006:2023 Section 6.2.1 details all three — but prescribes no minimum statistical validity criteria. An EnPI expressed as energy per unit of output that shows apparent improvement solely because output volume increased, without a corresponding efficiency gain, is technically compliant if the methodology is documented. It is also technically meaningless.
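A minimal sketch of that effect, assuming a simple site model with a fixed base load plus a constant per-unit load. The function name and every figure are illustrative assumptions, not values taken from the standard.

```python
def energy_intensity(base_load_kwh: float, kwh_per_unit: float, units: float) -> float:
    """Energy per unit of output for a site with a fixed base load (assumed model)."""
    if units <= 0:
        raise ValueError("units must be positive")
    return (base_load_kwh + kwh_per_unit * units) / units


# Hypothetical site: 400,000 kWh/yr base load and 2.0 kWh per unit produced.
baseline = energy_intensity(400_000, 2.0, 100_000)  # baseline-year output
current = energy_intensity(400_000, 2.0, 125_000)   # output up 25%, same equipment

change_pct = (current - baseline) / baseline * 100
print(f"baseline {baseline:.2f} kWh/unit, current {current:.2f} kWh/unit, "
      f"change {change_pct:.1f}%")
```

Nothing about the equipment differs between the two calls. The ratio falls from 6.00 to 5.20 kWh per unit, roughly 13%, purely because the same base load is spread over more units.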

Then there is the energy baseline itself. Clause 6.5 requires the organisation to establish an EnB using data from the energy review and to revise it when EnPIs no longer reflect energy performance, when major changes to static factors have occurred, or according to a predetermined method. ISO 50006:2023 defines “static factors” as factors that affect energy performance materially but do not routinely change: facility size, design of installed equipment, number of weekly shifts, range of products.

The critical word is “major.” Neither ISO 50001:2018 nor ISO 50006:2023 prescribes a quantitative threshold for what constitutes a major change. No percentage shift in equipment capacity, production volume, or floor area triggers a mandatory baseline reset. The determination is entirely delegated to the organisation.

That delegation is the design of the standard, not an oversight. But most organisations never define their own significance threshold. The result: “no baseline revision” becomes default inaction rather than a governed decision.

Where Organisations Fail on Baseline Revision

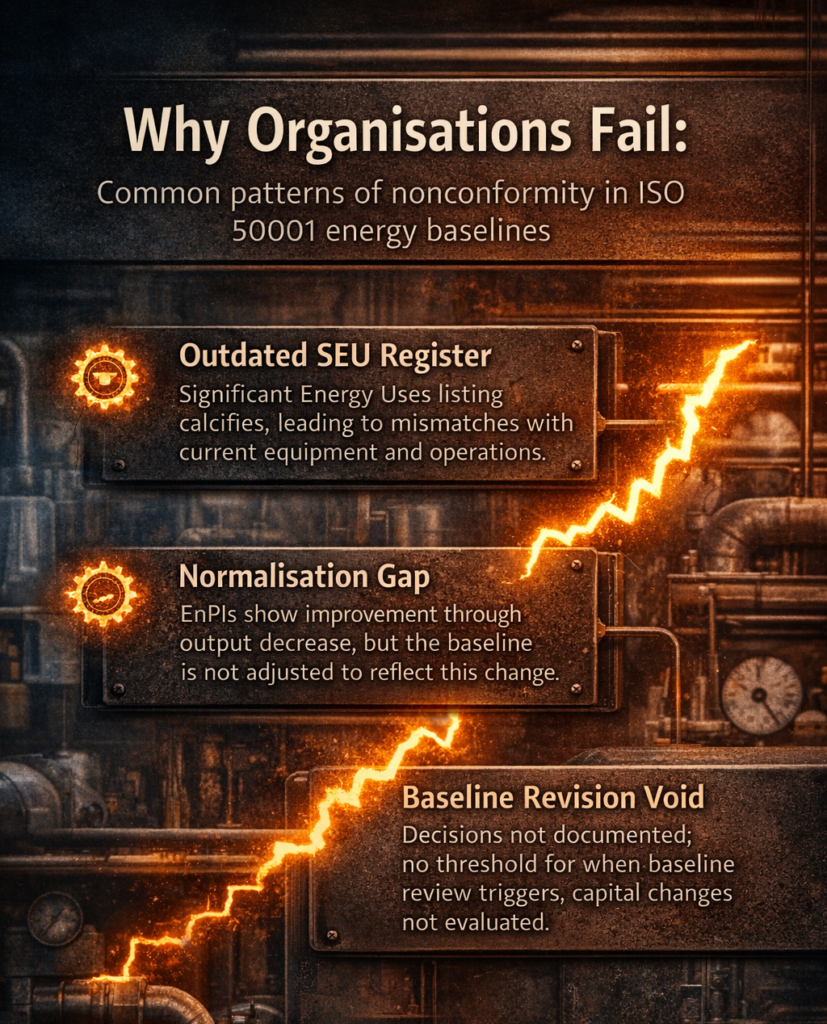

The nonconformity pattern is predictable. It shows up at surveillance and recertification audits with consistent frequency, and energy baseline drift is the common thread.

The SEU register calcifies. Organisations build it during initial certification, map consumption data, document the energy review — and then leave it untouched through equipment replacements, production line additions, and facility modifications. The energy review becomes a certification-stage activity rather than an operational management process. When auditors compare the SEU register against the current plant asset register during a year-two surveillance audit, the mismatch is visible. Equipment that no longer exists is still listed as an SEU. New high-consumption systems have no SEU designation. Auditors notice.
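The comparison auditors make can be sketched as a simple reconciliation. This is an illustration only: the asset identifiers, the consumption figures, and the 500,000 kWh designation cut-off are all hypothetical, and an organisation's real SEU criteria may not be a single consumption threshold.

```python
def reconcile_seu_register(
    seu_register: set[str],
    asset_register: dict[str, float],
    seu_threshold_kwh: float,
) -> tuple[list[str], list[str]]:
    """Compare the SEU register with the current plant asset register.

    asset_register maps asset id -> annual consumption in kWh.
    Returns (SEUs no longer in the plant, large consumers with no SEU designation).
    """
    retired = sorted(seu_register - set(asset_register))
    undesignated = sorted(
        asset_id
        for asset_id, kwh in asset_register.items()
        if kwh >= seu_threshold_kwh and asset_id not in seu_register
    )
    return retired, undesignated


# Hypothetical registers three years after certification.
seus = {"COMP-01", "COMP-02", "CHILLER-01"}
assets = {
    "COMP-03": 900_000,     # replacement compressor
    "CHILLER-01": 600_000,
    "LINE-04": 1_200_000,   # new production line
    "LIGHTING": 80_000,
}

retired, undesignated = reconcile_seu_register(seus, assets, seu_threshold_kwh=500_000)
print("Listed as SEU but not in plant:", retired)
print("In plant, above cut-off, not an SEU:", undesignated)
```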

EnPI trend data is presented without context. During recertification, organisations present three years of trend data showing apparent performance improvement. Experienced auditors probe whether the improvement reflects genuine efficiency gains or operational changes. A manufacturing plant running at 60% of its baseline-year capacity will show a fall in absolute consumption that is entirely a utilisation artefact, while its energy per unit of output will typically rise as fixed base load is spread over fewer units. Neither movement says anything about efficiency. Unless the organisation normalised the EnPI for production volume, or revised the baseline to reflect the lower output, the comparison is mathematically invalid. The standard doesn’t force normalisation. It requires EnPIs to be “appropriate.” The audit question is whether the organisation’s documented methodology supports that claim.

The baseline revision decision record doesn’t exist. This is the gap that converts an implementation weakness into an audit finding. If a capital project was recorded in the management system during the certification cycle — compressor replacement, facility extension, process line change — auditors expect a corresponding baseline review decision. Either “revision not required because the change did not meet our defined significance threshold” (which requires having a documented threshold) or evidence of a revised baseline with updated methodology. Absence of both is a nonconformity. The finding typically lands as a minor on Clause 6.5, but the systemic nature of the gap — touching 6.3, 6.4, and 6.5 simultaneously — can escalate to major if the entire EnPI reporting chain is affected.
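One way to find this gap before an auditor does is to cross-check recorded changes against baseline review decisions. The sketch below is hypothetical throughout: the record structure, field names, and change identifiers are assumptions, not a format any certification body prescribes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BaselineDecision:
    """A documented Clause 6.5 review decision for one recorded change (assumed fields)."""
    change_id: str
    outcome: str      # "no_revision" or "revised"
    rationale: str


def changes_without_decision(
    change_ids: list[str], decisions: list[BaselineDecision]
) -> list[str]:
    """Return recorded changes that have no baseline review decision at all."""
    decided = {d.change_id for d in decisions}
    return [c for c in change_ids if c not in decided]


# Hypothetical capital projects logged during the certification cycle.
changes = ["CAPEX-2023-014", "CAPEX-2024-003", "CAPEX-2024-021"]
decisions = [
    BaselineDecision(
        change_id="CAPEX-2023-014",
        outcome="no_revision",
        rationale="Capacity change below the documented significance threshold",
    ),
]

missing = changes_without_decision(changes, decisions)
print("Changes with no baseline review decision:", missing)
```

Each identifier in `missing` is a change for which neither a "revision not required" rationale nor a revised baseline exists.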

The CSRD Assurance Problem

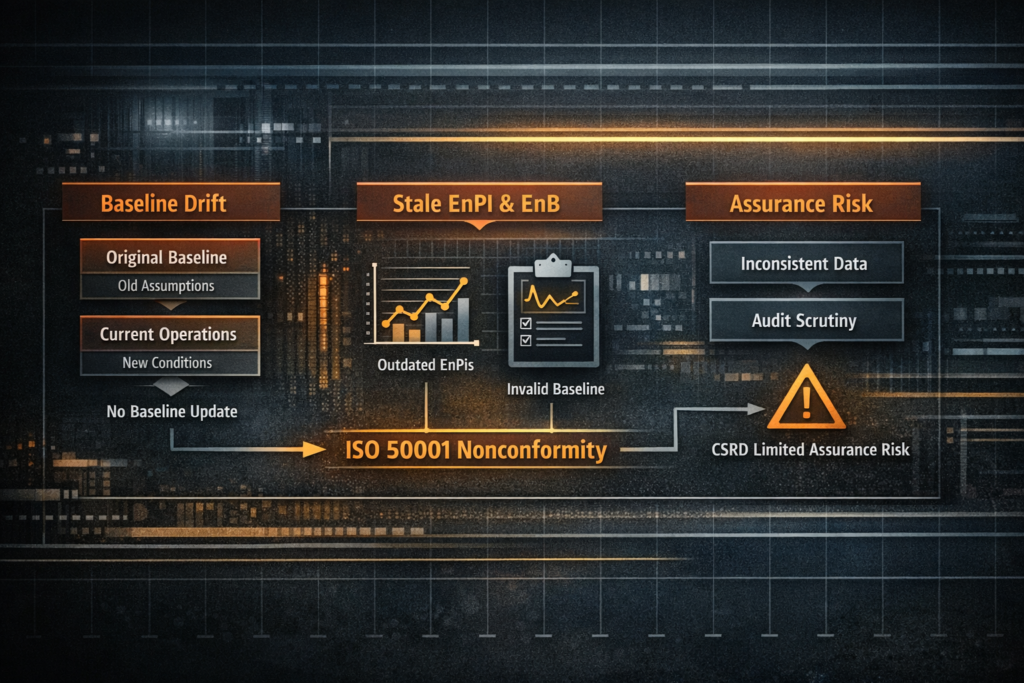

The same EnPI values that fail scrutiny at a recertification audit are, in many organisations, the source data for CSRD energy disclosures. That is where an ISO 50001 energy baseline drift problem becomes an external reporting risk.

ESRS E1-5 requires disclosure of total energy consumption, breakdown by renewable and non-renewable sources, and energy intensity ratios. The intensity ratio needs a denominator — net revenue or a physical output metric. Where ISO 50001 EnPIs feed directly into these disclosures, the consistency of the denominator definition across reporting periods determines whether the data is comparable. An undisclosed baseline revision — or an undisclosed absence of a required revision — is a methodology inconsistency.

The original CSRD timeline had limited assurance escalating to reasonable assurance. Following the EU Omnibus agreement in December 2025, that escalation has been removed. Limited assurance remains the requirement, with EU limited assurance standards expected by October 2026 and an amended ESRS delegated act anticipated for application from FY 2027.

What does limited assurance actually test? Under the IAASB ISSA 5000 framework, the assurance provider must conclude that nothing has come to their attention indicating material misstatement. The procedures include inquiry, analytical procedures, and limited testing of underlying data. A sustained methodology inconsistency — energy intensity ratios calculated against a baseline that no longer reflects operational conditions, with no documented revision decision — is exactly the pattern that analytical procedures surface. The assurance provider compares year-on-year energy intensity trends against disclosed operational changes. Production volume drops 30% but energy intensity improves 15%? The analytical relationship breaks down without a documented normalisation or baseline adjustment.

No published guidance — not from EFRAG, not from the IAASB, not from any ISO 50001 certification body — explicitly maps ISO 50001 EnPI baseline methodology documentation to CSRD ESRS E1 assurance criteria. The chain connecting Clause 6.5 baseline validity to ESRS E1-5 limited assurance must be constructed by inference across three separate normative frameworks. That the chain is inferential does not make it weak. Each link is individually well-grounded. But organisations waiting for an explicit regulatory instruction to fix their baselines will find that the assurance provider arrives before the guidance does.

The EU EED Recast Adds Compliance Pressure

EU Directive 2023/1791 creates a parallel obligation that reinforces the baseline problem. Enterprises consuming over 85 TJ per year (averaged over three years) must implement a certified energy management system by October 11, 2027 — ISO 50001 is explicitly recognised as the compliant route. Those in the 10–85 TJ range must conduct a qualified energy audit and publish an action plan in their annual report by October 11, 2026, repeating every four years.

The Directive mandates the system but does not specify EnPI recalibration requirements or data quality standards for the published action plan. It defers entirely to ISO 50001 for the certified EnMS route. The baseline drift problem that ISO 50001 doesn’t resolve gets inherited by the EED compliance mechanism: a certified EnMS with stale EnPIs satisfies the Directive’s letter while undermining the data quality that the publication obligation implicitly requires.

Member State transposition was due by October 11, 2025. National implementation varies — France’s AFNOR published transposition FAQ guidance in December 2025 indicating the process was still underway.

Sector-Specific Baseline Drift Variations

The energy baseline drift problem manifests differently depending on which variable drives energy performance.

Manufacturing

Production output volume is the primary relevant variable. A plant operating at 60% of its baseline-year capacity shows lower absolute consumption that is entirely a utilisation artefact, and its energy per unit of output shifts for the same reason, because fixed base load is spread over fewer units. ISO 50006:2023 Annexes D and E provide normalisation examples applicable to production volume. The specific audit risk: where a production line was decommissioned or added during the certification cycle and the SEU register was not updated, the EnPI boundary no longer matches the physical operational boundary.
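A minimal sketch of production-volume normalisation, assuming a single-variable linear baseline model fitted by ordinary least squares. It is not a reproduction of the ISO 50006 annex examples; the data, units, and function name are illustrative assumptions.

```python
def fit_line(x: list[float], y: list[float]) -> tuple[float, float]:
    """Ordinary least squares for y = intercept + slope * x."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope


# Hypothetical baseline periods: output (thousand units) and energy (MWh).
baseline_output = [80, 90, 100, 110, 120]
baseline_energy = [560, 580, 600, 620, 640]
intercept, slope = fit_line(baseline_output, baseline_energy)

# Reporting period at 60% of typical baseline output.
current_output, current_energy = 60, 510

# Energy the baseline-era plant would have used at today's output.
expected_energy = intercept + slope * current_output
normalised_saving_pct = (expected_energy - current_energy) / expected_energy * 100

# Raw ratios, with no adjustment for output.
raw_baseline_intensity = sum(baseline_energy) / sum(baseline_output)
raw_current_intensity = current_energy / current_output

print(f"expected {expected_energy:.0f} MWh, actual {current_energy} MWh, "
      f"normalised saving {normalised_saving_pct:.1f}%")
print(f"raw intensity {raw_baseline_intensity:.2f} -> {raw_current_intensity:.2f} MWh per thousand units")
```

With these figures the raw ratio and the normalised comparison point in opposite directions, which is why an unadjusted trend line is not evidence either way.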

Commercial Real Estate

Post-pandemic occupancy restructuring has created systematic baseline drift across office portfolios. Baselines set at pre-2020 full occupancy are still used to claim energy performance improvement at 50–60% occupancy. Occupancy rate functions as a static factor under ISO 50006:2023’s definition — a major change in scheduled occupancy pattern meets the Clause 6.5 revision criterion.

Data Centres

Power Usage Effectiveness (PUE) is the standard EnPI. When GPU-dense AI compute racks are deployed at a higher power density than the baseline IT load assumed (for example, rack density rising from 5 kW to 20 kW per rack), total facility energy increases while PUE may appear stable or even improve. The IT load denominator shift is a static factor change requiring baseline review. The EED recast imposes additional disclosure obligations on data centres with IT loads above 500 kW.
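A worked illustration of the PUE effect, using the standard definition of PUE as total facility power divided by IT power. The rack count and the overhead figures for cooling and power distribution are hypothetical.

```python
def pue(it_kw: float, overhead_kw: float) -> float:
    """Power Usage Effectiveness: total facility power divided by IT power."""
    if it_kw <= 0:
        raise ValueError("it_kw must be positive")
    return (it_kw + overhead_kw) / it_kw


RACKS = 200  # hypothetical hall size

# Baseline: 5 kW per rack, 500 kW of cooling and distribution overhead (assumed).
baseline_it_kw = RACKS * 5
baseline_total_kw = baseline_it_kw + 500
baseline_pue = pue(baseline_it_kw, 500)

# After AI deployment: 20 kW per rack, 1,800 kW of overhead (assumed).
current_it_kw = RACKS * 20
current_total_kw = current_it_kw + 1_800
current_pue = pue(current_it_kw, 1_800)

print(f"PUE {baseline_pue:.2f} -> {current_pue:.2f}")
print(f"Total facility load {baseline_total_kw} kW -> {current_total_kw} kW")
```

Here PUE moves from 1.50 to 1.45 while total facility load nearly quadruples, so the indicator reports improvement across a change that has redefined its own denominator.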

Heavy Industry

Process heat efficiency in steel, cement, and petrochemical operations depends on feedstock composition and product mix. Changes in ore grade, clinker-to-cement ratio, or feed gas composition alter the energy-process relationship at a fundamental level. These changes occur incrementally — no single event triggers the “major change” threshold. Cumulative small shifts over a three-year certification cycle can produce material baseline drift without any single qualifying event. This is the hardest case for the self-determination problem.

Practical Steps: Building a Baseline Review Trigger Protocol

- Document an EnB Revision Significance Threshold (Month 1). Write a formal threshold definition under Clause 6.5 and link it to your change management procedure. Define what “major” means for your operations: a change in rated equipment capacity above a stated percentage of total SEU capacity; a production volume deviation beyond a stated range from the baseline average; a facility area change above a stated proportion; a new product category or feedstock type not present in the baseline period; an occupancy structure shift above a stated change from baseline. The standard requires no specific values. The act of documenting them converts inaction into a governed decision.

- Integrate into change management (Months 1–2). Amend your operational change management procedure to include an EnB review checklist step whenever a capital expenditure, equipment procurement, or facility modification is approved. Assign the energy management representative or SEU owner as a required reviewer for any change approaching your thresholds. Record the output: either “threshold not met — no revision required” with documented reasoning, or “threshold met — revision initiated” with reference to the revised baseline record.

- Revalidate statistical EnPI models (Months 2–3, recurring). For regression-based EnPI models, establish a periodic revalidation schedule — minimum annually, and also triggered when your significance criteria are met. Document the R² value, residual analysis, and decision on continued model validity. ISO 50006:2023 does not prescribe minimum R² thresholds. Document your own adequacy criterion and retain it as documented information under Clause 7.5.

- Build the CSRD data lineage (Months 3–6). Map each ESRS E1-5 energy intensity data point to its source EnPI, with explicit reference to the applicable baseline period, any revisions made, and the revision decision record. Where baseline revisions occurred during the comparative period, prepare a methodology note for the sustainability report disclosing the revision and its effect on year-on-year comparability. The evidence chain from meter data through SEU record through EnPI calculation through baseline comparison to ESRS E1-5 disclosure must be traceable without gaps.
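The first two steps can be sketched as a small check that runs whenever a change is approved. Everything here is an assumption for illustration: the class and function names are hypothetical, only a subset of the possible criteria is shown, and the percentages are placeholders, since the standard prescribes no values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SignificanceThresholds:
    """Organisation-defined limits. The values used below are placeholders."""
    seu_capacity_pct: float
    production_volume_pct: float
    floor_area_pct: float


def review_change(
    limits: SignificanceThresholds,
    seu_capacity_change_pct: float = 0.0,
    production_change_pct: float = 0.0,
    floor_area_change_pct: float = 0.0,
    new_product_or_feedstock: bool = False,
) -> dict[str, object]:
    """Return a decision record: whether revision is required, and why."""
    reasons = []
    if abs(seu_capacity_change_pct) > limits.seu_capacity_pct:
        reasons.append("SEU capacity change above threshold")
    if abs(production_change_pct) > limits.production_volume_pct:
        reasons.append("production volume deviation above threshold")
    if abs(floor_area_change_pct) > limits.floor_area_pct:
        reasons.append("floor area change above threshold")
    if new_product_or_feedstock:
        reasons.append("new product category or feedstock")
    return {"revision_required": bool(reasons), "reasons": reasons}


limits = SignificanceThresholds(
    seu_capacity_pct=10.0, production_volume_pct=20.0, floor_area_pct=10.0
)

compressor_swap = review_change(limits, seu_capacity_change_pct=15.0)
minor_dip = review_change(limits, production_change_pct=-8.0)
print(compressor_swap)
print(minor_dip)
```

Both outcomes are worth retaining as documented information. The second record is the "threshold not met" decision that is usually missing at audit.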

The ISO 50006 and ISO 50015 Support Framework

Two supporting standards provide methodology guidance that ISO 50001:2018 itself does not.

ISO 50006:2023 (second edition, May 2023) upgraded its normalisation guidance and added procedures for maintaining EnPIs and EnBs when static factors change (Section 9.2). It describes three EnPI model types and provides step-by-step normalisation in Annexes D through F. Section 9.2 directly addresses the scenario: if a statistical model is in use and a static factor changes, evaluate whether the change invalidates the existing regression relationship. Adjust the baseline or develop a new model.

But ISO 50006 is a guidance standard. Conformance to ISO 50001 does not require conformance to ISO 50006. An organisation can hold certification with an EnPI methodology that ISO 50006 would identify as statistically inadequate. No audit mechanism enforces the alignment.

ISO 50015:2014 establishes measurement and verification (M&V) principles: accuracy (uncertainty characterised), completeness (all relevant effects accounted for), and consistency (methods applied uniformly across periods). A non-normalised EnPI under changed conditions violates all three. The edition status of ISO 50015 is unconfirmed as of early 2026 — a revision would affect the M&V framework.

Clause reference reflects mapped standard requirement for ISO 50015:2014. Verify against current edition before audit use.

The Implementation Gap the Standard Doesn’t Close

ISO 50001:2018 Clause 6.5 requires baseline revision under stated conditions but prescribes no quantitative trigger thresholds. ISO 50006:2023 provides methodology for normalisation and static factor evaluation but prescribes no statistical validity criteria. No IAF mandatory document, no CB guidance note from BSI, DNV, TÜV, or Bureau Veritas, and no national accreditation body publication provides a decision matrix for when an operational change makes an EnPI statistically invalid. The CSRD and ISSA 5000 require methodology consistency but do not reference ISO 50001 baseline documentation specifically.

Every link in the chain is individually sound. No single document connects them.

Organisations operating in this gap are not non-compliant. They are compliant with each framework independently while producing energy performance data that will not survive cross-framework scrutiny. The fix is procedural, not technical. Document your significance thresholds. Integrate baseline review into change management. Build the data lineage before the assurance provider asks for it.

The regulatory instruction you’re waiting for may never arrive in the form you expect. The assurance engagement will.

This content is for educational purposes only and does not constitute certification, legal, or regulatory advice. Organisations should consult qualified professionals for implementation specific to their context.

Clause mapping reflects common audit practice. Verify with your certification body for specific expectations.

About AEC International

AEC International provides ISO certification, training, and consultancy services at the intersection of energy management, environmental compliance, and ESG reporting. We support organisations across industries in achieving and maintaining ISO certification — from gap analysis and implementation through audit preparation and continual improvement.